The landscape of digital product design is shifting from tedious pixel-pushing to high-level creative direction. For years, closing the gap between a fleeting hand-drawn idea and a high-fidelity prototype took hours of manual labor in vector tools. The emergence of sketch-to-image technology has changed this workflow. By letting designers input rough outlines and receive polished visual assets in seconds, sketch-to-image tools connect human intuition with machine precision and support a “fail fast, iterate faster” mentality that was previously impractical.

How Sketch to Image is supercharging UI/UX design workflows

Why Sketch to Image is a Game-Changer for UI/UX

The transition from a brainstorming session to a client-ready presentation often suffers from “innovation leakage,” where the original energy of a sketch is lost in the technical constraints of design software. Sketch-to-image technology addresses this by preserving the designer's intent while automating execution.

Rapid Visualization of Low-Fi Concepts

Traditionally, moving from a paper wireframe to a digital mockup required recreating every element from scratch. With AI-driven rendering, a rough doodle of a mobile dashboard can be transformed into a realistic interface in seconds. This allows designers to judge the visual weight, balance, and color harmony of a concept before committing to a single line of code or a complex Figma component.

Reducing Cognitive Load During Brainstorming

When designers focus too much on “how” to draw a button or align a grid, they lose focus on the “why” of the user experience. By offloading the rendering process to an AI, the designer remains in a state of flow. You focus on the spatial logic and user journey through your sketches, while the AI handles the aesthetic polish, textures, and lighting environments.

Democratizing Design Feedback

Stakeholders often struggle to interpret “squiggles” on a whiteboard. By converting these sketches into realistic product renders, designers can present ideas that look and feel like finished products. This leads to more accurate feedback early in the process, preventing expensive mid-development pivots and ensuring that everyone, from product managers to developers, is aligned on the visual direction.

Transformation Scenarios: Integrating ColorifyAI into Your Workflow

Integrating this technology isn’t just about making pretty pictures; it’s about strategic efficiency. Whether you are in the discovery phase or the final polish, ColorifyAI's sketch-to-image tool provides the technical backbone to turn abstract lines into concrete design solutions.

Early-Stage Wireframing and Layout Exploration

In the initial stages of UI design, layout is king. You can sketch various navigation patterns (for example, a sidebar versus a bottom bar) and use ColorifyAI to apply different design systems to these layouts in seconds. By seeing how a layout looks in “Dark Mode” or “Glassmorphism” from a simple sketch, you can make informed structural decisions in minutes rather than days.

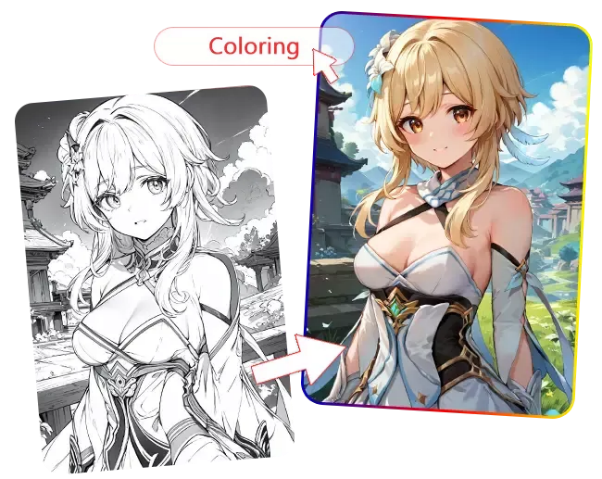

14 Distinct Styles for Unique Results

Transform your drawings into vivid artwork with 14 creative options, including Anime, Ghibli, Manga, Webtoon, and more. This sketch to color feature allows you to experiment with different aesthetics, textures, and moods, instantly visualizing your ideas in multiple artistic directions without redrawing your original sketch.

Creating Unique Branding Elements and Icons

UI/UX isn’t just about layouts; it’s about the micro-details. Designers often need bespoke icons or decorative elements that don’t exist in standard libraries. By sketching a unique shape or a brand mascot and running it through the sketch-to-image pipeline, you can generate high-fidelity assets that perfectly match the brand’s aesthetic, complete with realistic shadows and materials.

Exploring Texture and Material Design

For apps that require a high degree of tactility—such as fintech apps wanting a “metallic” feel or wellness apps seeking “organic” textures—sketching the basic form and defining the material via AI is incredibly efficient. ColorifyAI allows you to specify “brushed aluminum” or “soft frosted glass” over your sketch, providing a level of material depth that is difficult to achieve manually.

Generating High-Fidelity Placeholders for Mockups

We’ve all used placeholder images, the visual equivalent of “lorem ipsum,” that don’t quite fit the vibe of a design. With this technology, you can sketch the specific type of photography or illustration you need for a hero section. ColorifyAI will generate a custom image that follows the composition of your sketch, so the placeholder enhances the design rather than distracting from it.

Testing Responsive Breakpoints Visually

Sketching how a component collapses from desktop to mobile is one thing; seeing it rendered is another. You can provide sketches of the same component in different aspect ratios, and the AI will maintain visual consistency across them. This helps in identifying whether a certain aesthetic works as well on a small screen as it does on a wide-screen dashboard.

Key Features of ColorifyAI Sketch to Image

What sets ColorifyAI apart in the crowded field of generative AI is its commitment to designer-centric precision and output quality. It isn’t just a general art generator; it is a tool tuned for professional creative workflows.

High-Precision Edge Detection and Control

ColorifyAI utilizes advanced neural networks that respect the “sanctity of the line.” Unlike models that take too much creative liberty, this tool ensures that your structural boundaries remain intact. If you draw a 1px border for a card, the AI understands that as a structural constraint, ensuring the resulting render fits perfectly within your intended UI grid.
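To make the idea of lines as structural constraints concrete, here is a minimal sketch of classic Sobel edge detection, which marks where a scanned sketch changes sharply from paper to ink. It is an illustration only and not a description of how ColorifyAI works internally; the function name `sobel_edge_mask` and the default threshold are hypothetical choices for this example.

```python
from typing import List

Gray = List[List[int]]  # rows of 0-255 grayscale values

# Standard 3x3 Sobel kernels for horizontal and vertical gradients.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_edge_mask(img: Gray, threshold: int = 128) -> List[List[int]]:
    """Return a 0/1 mask marking pixels whose gradient magnitude
    (approximated as |gx| + |gy|) meets the threshold.
    Border pixels are left as 0 because the kernel does not fit there."""
    h, w = len(img), len(img[0])
    mask = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0
            for dy in range(3):
                for dx in range(3):
                    p = img[y + dy - 1][x + dx - 1]
                    gx += SOBEL_X[dy][dx] * p
                    gy += SOBEL_Y[dy][dx] * p
            if abs(gx) + abs(gy) >= threshold:
                mask[y][x] = 1
    return mask


# Hypothetical input: white paper on the left, a dark filled area on the right.
sketch = [[255, 255, 255, 0, 0, 0] for _ in range(5)]
mask = sobel_edge_mask(sketch)
```

In this toy input, only the two columns on either side of the paper-to-ink transition are flagged. A renderer that treats such a mask as a hard boundary would keep a card's outline where the designer drew it.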

4K Resolution and Professional-Grade Texturing

For designers, resolution is non-negotiable. ColorifyAI offers 4K output that is suitable for presentation decks and high-res displays. Beyond pixels, the texturing engine is sophisticated enough to handle complex light interactions, such as subsurface scattering for skin or realistic refractions for glass elements in a UI.

Style Consistency and Reference Tuning

One of the biggest hurdles in AI design is maintaining a consistent “look” across multiple screens. ColorifyAI allows users to set style references. You can upload a brand’s style guide or a mood board, and the sketch-to-image engine will synthesize the sketch using the specific color palettes and artistic vibes of those references, ensuring your “Dashboard” and “Settings” pages look like they belong to the same app.
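As a rough illustration of what pulling a color palette from a style reference can mean, the sketch below finds the dominant colors in a list of RGB pixels by bucketing similar values and counting them. This is a simplified stand-in and not ColorifyAI's actual method; the function name `dominant_palette` and the `step` bucket size are assumptions made for this example.

```python
from collections import Counter
from typing import Iterable, List, Tuple

RGB = Tuple[int, int, int]


def dominant_palette(pixels: Iterable[RGB], k: int = 3, step: int = 32) -> List[RGB]:
    """Return the k most common colors after snapping each channel
    to the center of a bucket `step` values wide."""
    def bucket(color: RGB) -> RGB:
        r, g, b = ((v // step) * step + step // 2 for v in color)
        return (r, g, b)

    counts = Counter(bucket(p) for p in pixels)
    return [color for color, _ in counts.most_common(k)]


# Hypothetical mood-board pixels: mostly near-black, some near-white, one red accent.
reference = [(10, 10, 10)] * 5 + [(250, 250, 250)] * 3 + [(200, 30, 30)]
palette = dominant_palette(reference, k=2)
```

Applying the same extracted palette to every screen is one simple way to keep a “Dashboard” and a “Settings” page visually related, whatever rendering engine produces them.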

Intuitive User Interface for Non-Technical Creatives

The tool is designed with a “design-first” philosophy. You don’t need to be a prompt engineering expert to get great results. The interface allows for a natural interaction where you upload your sketch, select a few refined parameters (like “minimalist,” “isometric,” or “photorealistic”), and let the engine do the heavy lifting. This lowers the barrier to entry for traditional designers who are new to AI.

Conclusion: A New Era of Creative Efficiency

The integration of AI into the UI/UX workflow is not about replacing the designer; it is about amplifying their potential. By mastering these techniques, you reclaim the time spent on repetitive rendering tasks and reinvest it into deep user research and creative problem-solving. As tools like ColorifyAI Sketch to Image continue to evolve, the distance between a “napkin sketch” and a “market-ready product” will continue to shrink, ushering in an era where the only limit to design is the speed of thought.